Today was the 11th Annual Design Project Symposium for students from the Electrical and Computer Engineering Department at the University of Waterloo to display their projects. I’m fortunate that the Davis Centre at UW is on the walk to lunch. Doubly fortunate that my good friend Rumeesa Khalid reminds me of cool upcoming events in and around campus. Thanks R!

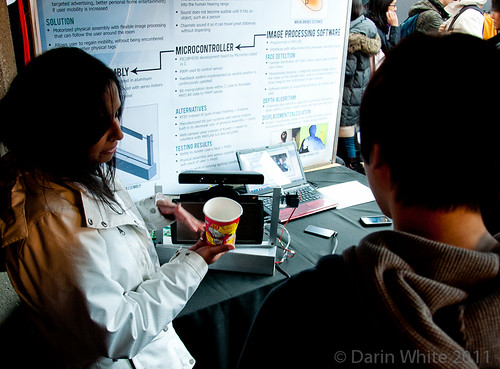

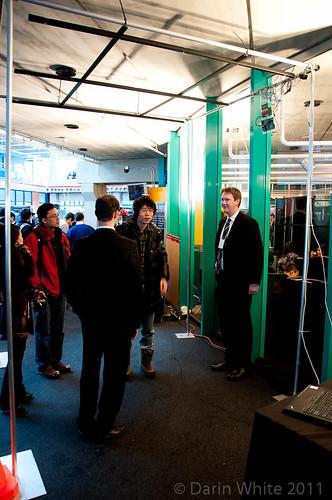

This project was really cool: a Kinect for tracking objects was mated to a servo-articulated pan-and-tilt mechanism that held a hypersonic sound speaker, which focuses sound into a tight beam. The idea is that the beam of sound could target and follow a user within a space.
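For the curious, here is a minimal sketch of the aiming math such a rig might use. This is my own illustration, not the team's code: the function name `pan_tilt_angles` and the coordinate frame (x to the right, y up, z straight ahead, in metres) are assumptions, and it pretends the speaker sits exactly where the depth sensor does.

```python
import math

def pan_tilt_angles(x, y, z):
    """Return (pan, tilt) in degrees needed to point at (x, y, z).

    Assumed frame: x to the right, y up, z forward from the sensor,
    all in metres, with the speaker co-located with the sensor.
    """
    # Pan rotates left/right about the vertical axis.
    pan = math.degrees(math.atan2(x, z))
    # Tilt rotates up/down, measured from the horizontal plane.
    tilt = math.degrees(math.atan2(y, math.hypot(x, z)))
    return pan, tilt

# A target 1 m to the right and 1 m ahead, at sensor height.
pan, tilt = pan_tilt_angles(1.0, 0.0, 1.0)
```

A real build would also need to correct for the offset between the sensor and the speaker, and clamp the angles to the servos' travel.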

I always find it most interesting to talk to the students about what didn’t go quite right. As I understand it, the noise and crowded activity of this venue made it hard for the Kinect to maintain a target lock on a human face, so the team adjusted the demo to follow this coffee cup. They also found MATLAB too slow for processing the Kinect data. And of course they have good ideas about how to solve these problems.

Ryan Fox! I’ve known Ryan through his many co-op terms at RIM.

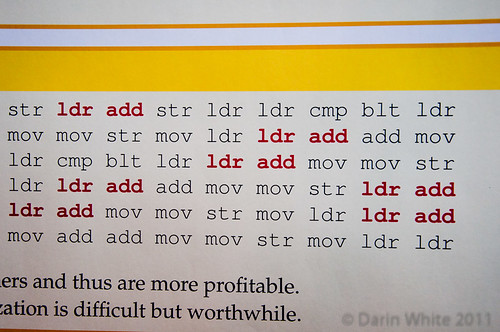

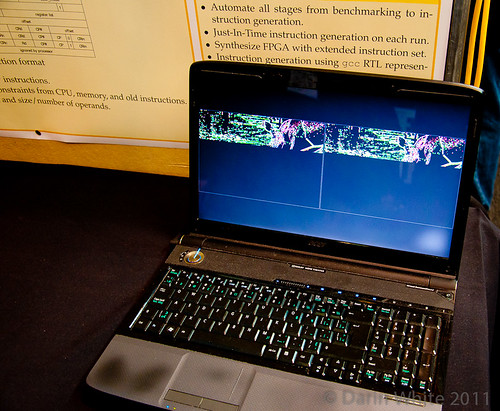

Ryan’s team built an analyzer that would assess domain-specific code for possible optimizations and suggest new processor instructions. In this case an image edge detection binary has a lot of load/add (in red) that could be condensed into one instruction by fabbing a new chip, or modifying an FPGA to include your new instruction.

The result: a 10% speed-up of the edge detection in the simulator on the left versus the one on the right. Great job, Ryan, and good to see you.
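To give a feel for the load/add idea, here is a toy sketch of how one might spot back-to-back instruction pairs worth fusing. It is only my guess at the flavour of the analysis, not the team's tool: the opcode names and the trace are made up, and a real analyzer would also have to check that the two instructions actually depend on each other.

```python
from collections import Counter

def count_adjacent_pairs(trace):
    """Count how often each (opcode, next_opcode) pair occurs back to back."""
    return Counter(zip(trace, trace[1:]))

# Hypothetical opcode trace, for illustration only.
trace = ["load", "add", "store", "load", "add", "load", "add", "branch"]
pairs = count_adjacent_pairs(trace)

# The most frequent pair is the first candidate for a fused instruction.
best_pair, best_count = pairs.most_common(1)[0]
print(best_pair, best_count)  # ('load', 'add') 3
```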

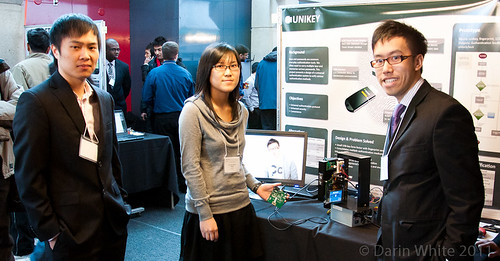

This team had an interesting take on a security system that incorporated a fingerprint scanner.

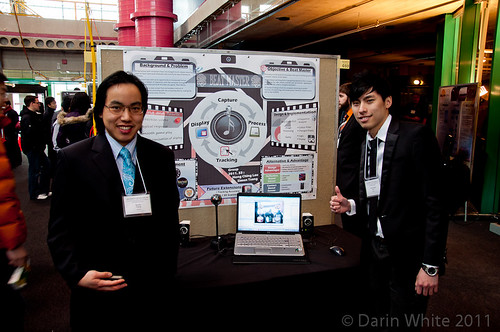

These guys built a “voice information scraper” that applied voice recognition to corporate earnings audio (from a call or presentation) and then transcribed it to accelerate business decision-making. It was challenging to use real-world data due to the limitations of the voice recognition library.

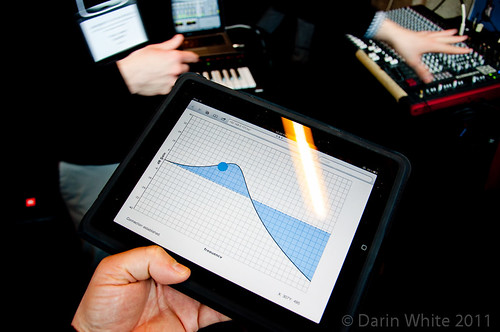

I liked this one as soon as I saw a keyboard and mixer.

On the iPad, you can wirelessly define a frequency response curve that modifies music playing through the system on the fly.

A new tool for DJs.

The “Air Piano” utilizes resistive flex sensors on the back of the glove fingers to detect a virtual keypress…

and was designed by these guys. I was hoping for a demo, but the prototype had been “overused” by the time I got there at noon. Murphy’s Special Law for Demos.

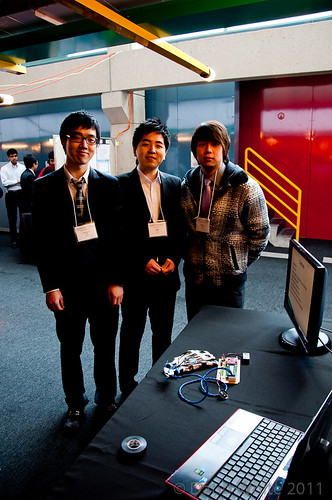

HeadMonster featured an overhead array of ultrasonic transducers to receive pings from a headphone-mounted ultrasonic transmitter. From those pings the system could (in a more optimal setting, but not in today’s demo) determine the position of the user within the space and generate localized sound to play through the user’s headphones.

The guys noted problems with environmental vibrations messing with their sensor array. These are the best learnings.
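Locating a transmitter from ping arrival times can be sketched roughly like this. It is a simplified 2D illustration under my own assumptions, not HeadMonster's method: `trilaterate_2d` is a hypothetical name, I assume the receivers know when the ping was sent (so each arrival time is a true time of flight), and I ignore height entirely.

```python
SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C

def trilaterate_2d(receivers, times):
    """Estimate (x, y) of a transmitter from three times of flight.

    receivers: three (x, y) receiver positions in metres.
    times: time of flight in seconds to each receiver (assumes the
    emission time is known, which a real system must arrange).
    """
    d1, d2, d3 = (SPEED_OF_SOUND * t for t in times)
    (x1, y1), (x2, y2), (x3, y3) = receivers
    # Subtracting the first circle equation from the other two
    # leaves two linear equations in x and y.
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("receivers must not be collinear")
    # Solve the 2x2 system with Cramer's rule.
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det
```

With noisy timing, like the vibration trouble the team described, more receivers and a least-squares fit would be the usual next step.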

Davis Centre was absolutely rockin’ at lunch. I was only able to spend an hour here and barely scratched the surface. Tons of projects.

Great use of reflective infrared light sensors here across two beams to determine the vector for the puck and robotically block the shot.
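Here is a rough sketch of how two beam crossings could give you the puck's vector and where to put the blocker. It is my own simplification, not the team's design: I assume each beam row reports where along its length the puck crossed and when, that the beams are parallel to the goal line, and that the puck doesn't bounce off a wall on the way. All names are hypothetical.

```python
def predict_intercept(hit1, hit2, beam1_y, beam2_y, goal_y):
    """Predict where and when the puck reaches the goal line.

    hit1, hit2: (x, t) crossing position in metres and time in seconds
    at the first and second beam. beam1_y, beam2_y, goal_y: distances
    of the two beams and the goal line along the table, in metres.
    Returns (x_at_goal, t_at_goal). Assumes straight-line travel.
    """
    (x1, t1), (x2, t2) = hit1, hit2
    dt = t2 - t1
    if dt <= 0:
        raise ValueError("second crossing must come after the first")
    if beam1_y == beam2_y:
        raise ValueError("beams must be at different positions")
    # Velocity components from the two crossings.
    vx = (x2 - x1) / dt
    vy = (beam2_y - beam1_y) / dt
    # Extrapolate from the second beam to the goal line.
    t_goal = t2 + (goal_y - beam2_y) / vy
    x_goal = x2 + vx * (t_goal - t2)
    return x_goal, t_goal

# Beams at 0.6 m and 0.4 m from the goal line, puck heading toward it.
x_goal, t_goal = predict_intercept((0.10, 0.00), (0.20, 0.05), 0.6, 0.4, 0.0)
```

The time estimate matters as much as the position, since it tells you how long the blocker has to get there.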

This is a prototype-scale touch-sensitive and skinnable keyboard…

designed by these guys. In a production-quality design, each key would feature a touch-sensitive LCD that could display arbitrary graphics. The keys taken together could replace the functionality of a touchpad.

Rumeesa is not casting a spell here…

She is playing these virtual drums via image tracking. This looked so fun I even put the camera down for a minute so I could try it.

Great job, guys.

Awesome to be so close to campus for brain snacks like this. The Nanotech Eng department’s symposium is on Friday, so be sure to check that out.

Happy making,

DW

Great !

Thank you for sharing. I would be interested in getting in contact with the Kinect/hypersonic project team, if you can put me in touch.

@hugobiwan
